Sam Altman and Other Giants Say that AI Could Be as Deadly as Pandemics and Nuclear Weapons

May 31, 2023

IBL News | New York

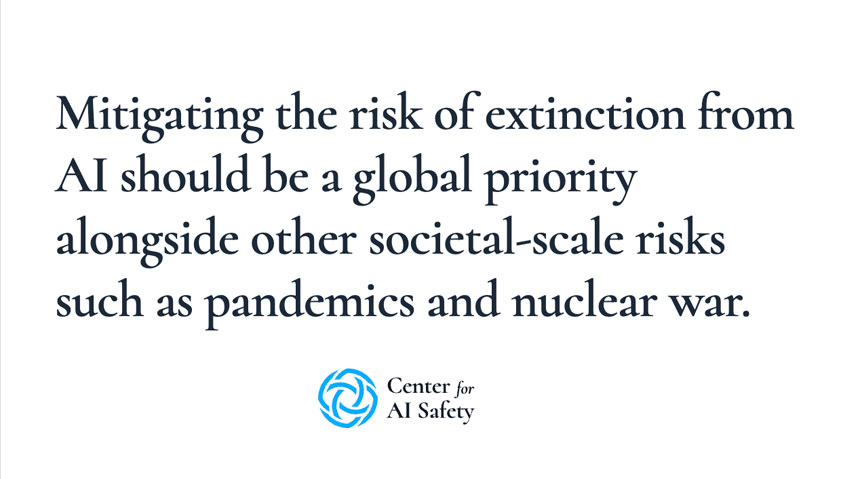

Hundreds of AI scientists, academics, tech CEOs, and public figures, including OpenAI CEO Sam Altman and DeepMind CEO Demis Hassabis, added their names to a one-sentence statement urging global attention to existential AI risk.

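The statement, hosted on the website of a San Francisco-based, privately funded not-for-profit called the Center for AI Safety (CAIS), states:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”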

A short explainer on CAIS’ website says the statement was kept succinct to overcome the difficulty of voicing concerns about advanced AI’s most severe risks and to open up discussion.

“It is also meant to create common knowledge of the growing number of experts and public figures who also take some of advanced AI’s most severe risks seriously,” stated the site.

Over the last two months, policymakers have heard these same concerns, as AI hype has surged on the back of expanded access to generative AI tools like OpenAI’s ChatGPT and DALL-E.

In March, Elon Musk and many other experts released an open letter calling for a six-month pause on the development of AI models more powerful than OpenAI’s GPT-4. The pause would buy time for shared safety protocols to be devised and applied to advanced AI.

IBL News is funded by the New York-based, family-owned company ibl.ai. Our stories adhere to the highest ethical standards in journalism and are available to news syndication agencies.