IBL News | San Francisco

Meta last week released FACET (Fairness in Computer Vision Evaluation), a new open-source benchmark dataset designed to evaluate and improve fairness in AI vision models.

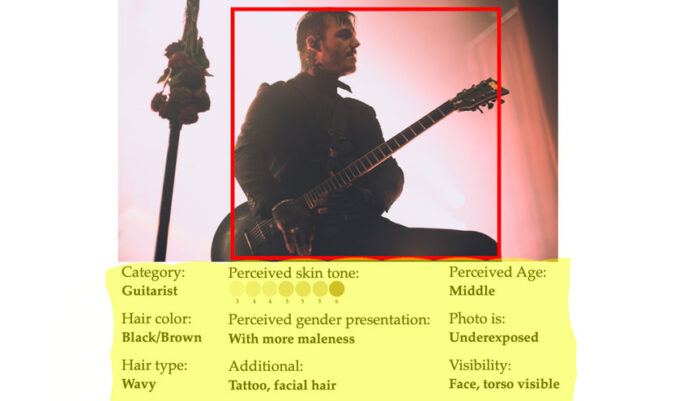

FACET consists of 32,000 images containing 50,000 people, each labeled by human annotators. It covers classes related to occupations and activities, such as “basketball player” or “doctor”, as shown in the picture above.

“Our goal is to enable researchers and practitioners to perform similar benchmarking to better understand the disparities present in their own models and monitor the impact of mitigations put in place to address fairness concerns,” Meta wrote in a blog post.

“It’s unclear whether the people pictured in [the images] were made aware that the pictures would be used for this purpose,” TechCrunch noted.

In a white paper, Meta said that the annotators were “trained experts” sourced from “several geographic regions”, including North America (United States), Latin America (Colombia), the Middle East (Egypt), Africa (Kenya), Southeast Asia (Philippines), and East Asia (Taiwan).

In addition to the dataset itself, Meta has made available a web-based dataset explorer tool.

