OpenAI Drops the Price of API Access for GPT-3.5 Turbo as Open-Source Models Expand

February 1, 2024

IBL News | New York

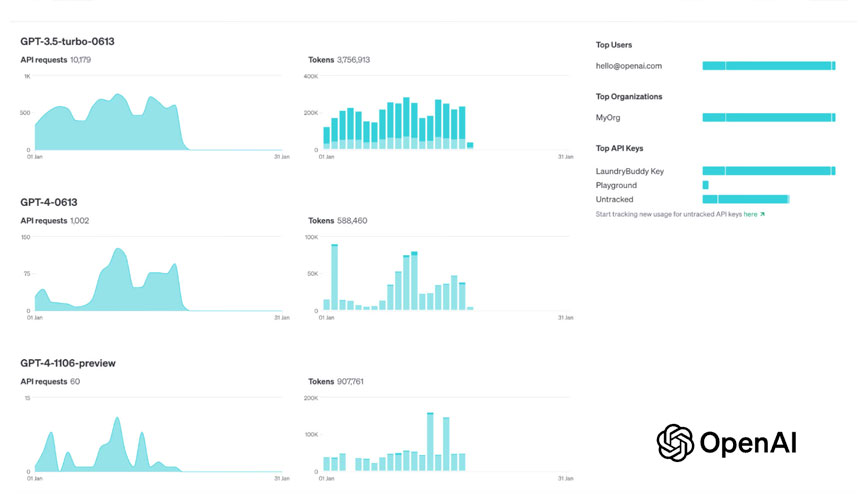

This month, OpenAI cut prices for GPT-3.5 Turbo, released new embedding models (an embedding is a sequence of numbers that represents the concepts in a piece of content), and introduced new ways for developers to manage API keys and understand API usage.

The San Francisco-based company is introducing two new embedding models: the smaller, highly efficient text-embedding-3-small and the larger, more powerful text-embedding-3-large.

Pricing for text-embedding-3-small is five times lower than for text-embedding-ada-002: the price per 1,000 tokens falls from $0.0001 to $0.00002.
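To make the difference concrete, here is a minimal sketch of the arithmetic in Python. The two per-1,000-token prices come from the figures above; the corpus size and the helper name `embedding_cost` are hypothetical, chosen only for illustration.

```python
from decimal import Decimal

# Prices per 1,000 tokens in USD, as quoted in the article.
ADA_002_PER_1K = Decimal("0.0001")
EMBED_3_SMALL_PER_1K = Decimal("0.00002")


def embedding_cost(tokens: int, price_per_1k: Decimal) -> Decimal:
    """Return the USD cost of embedding `tokens` tokens at a per-1k price."""
    return Decimal(tokens) * price_per_1k / Decimal(1000)


# Hypothetical corpus size, not a figure from the article.
corpus_tokens = 10_000_000

old_cost = embedding_cost(corpus_tokens, ADA_002_PER_1K)
new_cost = embedding_cost(corpus_tokens, EMBED_3_SMALL_PER_1K)
ratio = old_cost / new_cost

print(f"text-embedding-ada-002: ${old_cost:.2f}")
print(f"text-embedding-3-small: ${new_cost:.2f}")
print(f"ada-002 costs {ratio:.0f}x as much")
```

Decimal arithmetic is used here rather than floats so that very small per-token prices do not pick up rounding noise.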

Today, OpenAI introduced a new GPT-3.5 Turbo model, gpt-3.5-turbo-0125. “For the third time in the past year, we will be decreasing prices on GPT-3.5 Turbo to help our customers scale,” said the company.

Input prices are dropping by 50% and output prices by 25%, to $0.0005 per thousand input tokens and $0.0015 per thousand output tokens.
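As a rough illustration of what the cut means for a bill, the sketch below backs out the pre-cut prices from the percentages above and compares costs for a sample workload. The previous prices are derived from the article's figures rather than quoted in it, and the token counts and function names (`previous_price`, `workload_cost`) are hypothetical.

```python
from decimal import Decimal

# New gpt-3.5-turbo-0125 prices per 1,000 tokens in USD, as quoted in the article.
NEW_INPUT_PER_1K = Decimal("0.0005")
NEW_OUTPUT_PER_1K = Decimal("0.0015")


def previous_price(new_price: Decimal, cut_percent: int) -> Decimal:
    """Back out the pre-cut price from the new price and the percentage cut."""
    return new_price / (Decimal(1) - Decimal(cut_percent) / Decimal(100))


def workload_cost(input_tokens: int, output_tokens: int,
                  input_per_1k: Decimal, output_per_1k: Decimal) -> Decimal:
    """Return the USD cost of a workload given per-1k input and output prices."""
    total = Decimal(input_tokens) * input_per_1k + Decimal(output_tokens) * output_per_1k
    return total / Decimal(1000)


# Derived from the stated 50% and 25% cuts (an inference, not a quoted price).
old_input_per_1k = previous_price(NEW_INPUT_PER_1K, 50)
old_output_per_1k = previous_price(NEW_OUTPUT_PER_1K, 25)

# Hypothetical workload totals, summed across many requests.
input_tokens, output_tokens = 100_000, 2_000

old_cost = workload_cost(input_tokens, output_tokens, old_input_per_1k, old_output_per_1k)
new_cost = workload_cost(input_tokens, output_tokens, NEW_INPUT_PER_1K, NEW_OUTPUT_PER_1K)

print(f"before: ${old_cost:.3f}  after: ${new_cost:.3f}")
```

Because the input cut is larger than the output cut, input-heavy workloads such as document analysis see savings close to the full 50%, while output-heavy workloads save closer to 25%.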

This model will also have various improvements, including higher accuracy at responding in requested formats and a fix for a bug that caused a text encoding issue for non-English language function calls.

GPT-3.5 Turbo is the model most people interact with, usually through ChatGPT, and it now serves as a kind of industry standard. It is also popular through the API, where it is cheaper and faster than GPT-4 on many tasks.

Developers use these APIs for text-intensive applications, such as analyzing entire papers or books. OpenAI needs to keep those customers from leaving for open-source or self-managed models.

Meanwhile, Langfuse, which provides open-source observability and analytics for LLM apps, reported that it has been calculating costs for OpenAI and Anthropic models since October.

The updated GPT-3.5 Turbo model is now available.

It comes with 50% reduced input pricing, 25% reduced output pricing, along with various improvements including higher accuracy at responding in requested formats. Happy building! 🏗️ https://t.co/l0wF1Vswmm

— Logan.GPT (@OfficialLoganK) February 1, 2024

IBL News is funded by the New York-based, family-owned company ibl.ai. Our stories adhere to the highest ethical standards in journalism and are available to news syndication agencies.