IBL News | New York

Meta last week announced a generative AI model named CM3LEON that the company claims achieves state-of-the-art performance for high-resolution text-to-image generation. The company didn’t say whether, or when, it plans to release CM3LEON.

CM3LEON is also one of the first image generators capable of generating captions for images, laying the groundwork for more capable image-understanding models going forward, Meta says.

“What sets CM3LEON apart is its robust multimodal architecture and training. By leveraging large-scale datasets that encompass diverse textual and visual data, CM3LEON has acquired a deep understanding of the intricate relationship between words and images. This comprehensive training enables CM3LEON to generate and manipulate images with remarkable coherence and fidelity,” added the company.

Image generators like OpenAI’s DALL-E 2, Google’s Imagen, and Stable Diffusion rely on a process called diffusion to create art. In diffusion, a model learns how to gradually subtract noise from a starting image made entirely of noise, moving it step by step closer to an image that matches the target prompt.
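The step-by-step noise removal described above can be illustrated with a toy sketch. It is not how DALL-E 2, Imagen, or Stable Diffusion are actually implemented: the "image" is a short list of numbers, and the noise predictor is an oracle that already knows the target, standing in for the trained, prompt-conditioned neural network a real system would use. The names `toy_denoise` and `step_size` are hypothetical and chosen only for this illustration.

```python
import random


def toy_denoise(target, steps=50, step_size=0.2, seed=0):
    """Start from pure noise and remove a fraction of the estimated noise per step.

    ASSUMPTION: the noise estimate comes from an oracle that knows `target`.
    A real diffusion model replaces this with a learned network guided by
    the text prompt.
    """
    rng = random.Random(seed)
    # Starting "image": every value is drawn from Gaussian noise.
    x = [rng.gauss(0.0, 1.0) for _ in target]
    errors = []
    for _ in range(steps):
        # Oracle noise estimate: whatever separates the current image from the target.
        predicted_noise = [xi - ti for xi, ti in zip(x, target)]
        # Subtract only a fraction of the estimated noise, so the image
        # approaches the target gradually rather than in one jump.
        x = [xi - step_size * ni for xi, ni in zip(x, predicted_noise)]
        # Track the mean absolute distance to the target after this step.
        errors.append(sum(abs(xi - ti) for xi, ti in zip(x, target)) / len(target))
    return x, errors


if __name__ == "__main__":
    target_image = [0.0, 0.5, 1.0, 0.5]  # hypothetical 4-pixel "image"
    final_image, error_history = toy_denoise(target_image)
    print("error after first step:", error_history[0])
    print("error after last step:", error_history[-1])
```

Running the script prints the distance to the target after the first and last steps; the distance shrinks at every step, which is the gradual behavior the paragraph describes.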

Beyond image generation and editing, CM3LEON can also handle text tasks such as summarization, translation, and sentiment analysis for a given image.

Experts forecast a near future where AI systems will seamlessly navigate the realms of comprehension, editing, and generation across various mediums, including images, videos, and text.

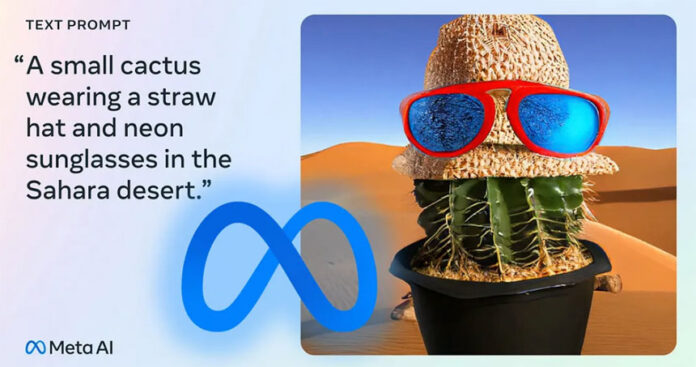

Meta’s image generator. Image Credits: Meta

The DALL-E 2 results. Image Credits: DALL-E 2
