IBL News | New York

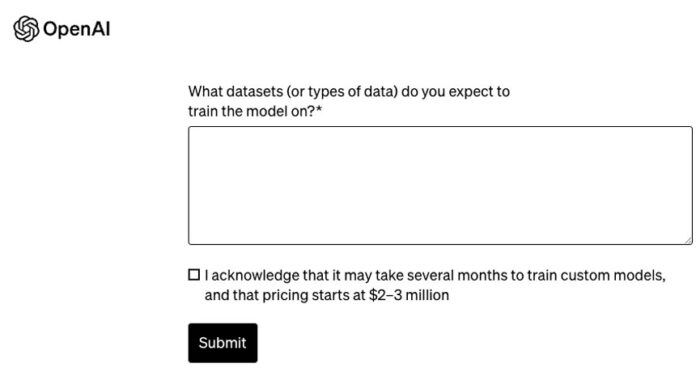

Training a custom model from scratch using OpenAI’s GPT-4 may take several months, with pricing starting at $2 to $3 million, according to the company.

The high price sparked discussion among practitioners on X (formerly Twitter). Many users agreed that fine-tuning a much smaller pre-trained base model would cost about one-tenth as much.

OpenAI justifies the price by stating:

“The Custom Models program gives selected organizations an opportunity to work with a dedicated group of OpenAI researchers to train custom GPT-4 models to their specific domain.”

“This includes modifying every step of the model training process, from doing additional domain-specific pre-training to running a custom RL post-training process tailored for the specific domain.”

“Organizations will have exclusive access to their custom models. This program is particularly applicable to domains with extremely large proprietary datasets—billions of tokens at minimum.”

Separately, OpenAI announced Data Partnerships, an initiative to work with organizations to produce public and private datasets for training AI models, as a way to combat toxic language and bias in models.

To process PDFs and other data in those large-scale datasets, OpenAI says it uses world-class OCR technology, along with automatic speech recognition (ASR) to transcribe spoken words.

It takes $2-$3M to train a custom model from scratch using OpenAI!!

You rarely, if ever, need to go this route. Yes, even if you are a rich F500 who has money to burn 🤣

Instead, you take a much smaller pre-trained base model and fine-tune on top of it.

You can do this… pic.twitter.com/pYQ67y0Vlj

— Bindu Reddy (@bindureddy) November 7, 2023

Training custom GPT-4 for your organization costs around $2-3 millions and take several months 😱

Worth it? pic.twitter.com/PykduP7GZR

— Shubham Saboo (@Saboo_Shubham_) November 7, 2023

I had lots of fun at OpenAI Dev Day.

I left very inspired by what’s possible ahead.

Here is a summary of my 5 key takeaways based on conversations and presentations:

– Prompt Engineering, RAG, and Finetuning can all be leveraged but which one you use and when you use it… pic.twitter.com/cRYDKzc4Za

— elvis (@omarsar0) November 8, 2023

OpenAI’s fine-tuning UI is now available! 🚀

We can now directly view our previously fine-tuned models in the dashboard, and soon you will be able to train them through the UI.

Additionally, they have increased the concurrent training limit from 1 to 3, allowing you to… pic.twitter.com/cs38V5BLJI

— Itamar Golan 🤓 (@ItakGol) September 21, 2023

OpenLetter to Sam Altman

Dear @sama

Merry Christmas. How’s the eggnog ?

I want to bring your attention to the fact that Microsoft defacto owns OpenAI now, and either you break out of that control structure entirely, or you become Corporate Vice President of Microsoft AI in 2…

— Varun (@varun_mathur) December 25, 2023

