OpenAI Trains Models to Explain Themselves Better

August 2, 2024

IBL News | New York

OpenAI researchers released a new scientific paper this month describing an algorithm by which OpenAI’s GPT-4 and other LLMs can learn to explain themselves better to users.

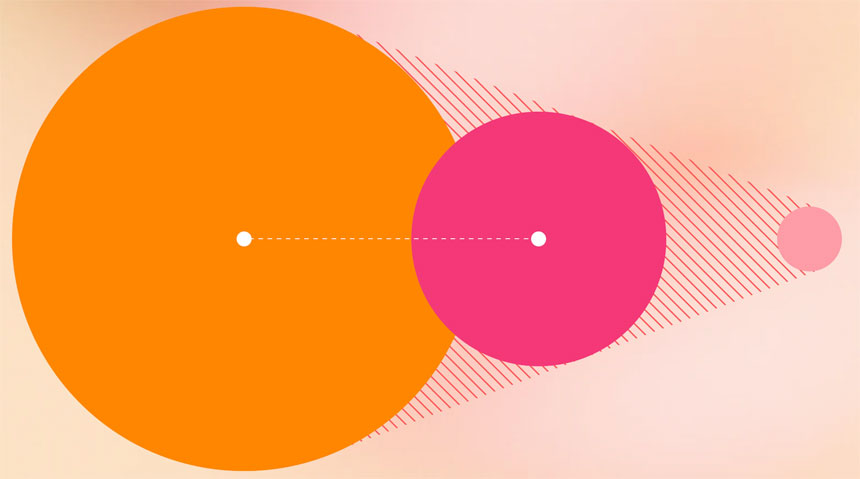

The paper “Prover-Verifier Games Improve Legibility of LLM Outputs” explains how OpenAI is improving the legibility of LLM outputs.

“Understanding and addressing the performance/legibility balance can lead to more effective and trustworthy AI applications, benefiting a wide range of fields where precise and clear communication is essential,” say the researchers.

OpenAI’s researchers used two custom fine-tuned GPT-4 family models and had them play several rounds of a prover-verifier game, in which the models were asked to answer grade school math word problems with known answers.
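For readers who want a feel for the mechanics, the following is a toy sketch of a game of this kind, not OpenAI's implementation. The names `Problem`, `toy_prover`, `toy_verifier`, and `play_rounds` are hypothetical, as is the `careless` flag that makes the prover err on odd rounds. The real setup uses fine-tuned language models, which this toy does not attempt to reproduce. Here one function writes a solution to an addition problem with a known answer, and a second function checks whether the written explanation holds up.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    question: str
    a: int
    b: int
    answer: int  # known ground-truth answer


def toy_prover(problem, careless):
    """Hypothetical stand-in for the prover: returns (explanation, answer)."""
    result = problem.a + problem.b
    if careless:
        result += 1  # deliberately wrong, so the verifier has something to catch
    explanation = f"{problem.a} + {problem.b} = {result}"
    return explanation, result


def toy_verifier(explanation):
    """Hypothetical stand-in for the verifier: re-checks the stated arithmetic."""
    left, right = explanation.split("=")
    x, y = (int(term) for term in left.split("+"))
    return x + y == int(right)


def play_rounds(problems, n_rounds):
    """Play several rounds and log verifier decisions next to the known answers."""
    log = []
    for round_index in range(n_rounds):
        careless = round_index % 2 == 1  # assumption: prover errs on odd rounds
        for problem in problems:
            explanation, answer = toy_prover(problem, careless)
            log.append({
                "round": round_index,
                "explanation": explanation,
                "accepted": toy_verifier(explanation),
                "correct": answer == problem.answer,
            })
    return log


if __name__ == "__main__":
    problems = [Problem("Ana has 3 apples and buys 4 more. How many now?", 3, 4, 7)]
    for entry in play_rounds(problems, n_rounds=2):
        print(entry)
```

Because the answers are known in advance, each verifier decision can be compared with the ground truth, which is what makes grade school math a convenient test bed for this kind of game.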


IBL News is funded by the New York-based, family-owned company ibl.ai. Our stories adhere to the highest ethical standards in journalism and are available to news syndication agencies.